As AI adoption accelerates across industries, privacy, legal, and marketing teams face growing pressure to understand emerging technologies and the risks they introduce. This webinar sets the stage by clarifying the AI trends that are shaping the regulatory landscape and what they mean operationally for organizations seeking to innovate responsibly.

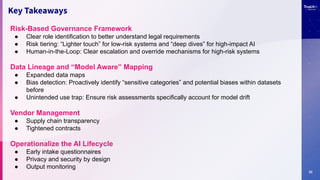

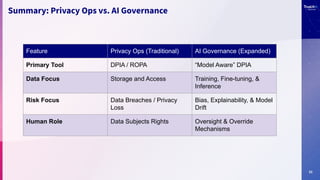

In this session, our experts will break down the evolving world of AI governance: what it means in practice, why it matters now, and how to fit it into privacy operations so that processes stay scalable, compliant, and efficient. You’ll gain a clear view of the challenges ahead, from algorithmic transparency to data lifecycle management, and learn how forward-thinking practitioners are preparing their organizations.

This webinar will review:

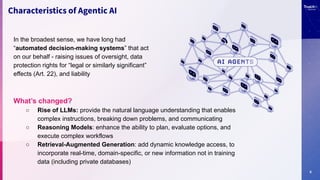

- How key AI trends are reshaping risk, compliance, and data governance expectations

- A deep dive into agentic AI: what the technology is, which risks it introduces, and how companies can manage them

- Practical steps to integrate AI governance into existing Privacy Ops workflows

- Emerging tools and methods to evaluate and manage AI-related risks

- Insights from seasoned AI and privacy professionals on operationalizing governance at scale

Join us to strengthen your expertise, stay ahead of accelerating regulatory change, and gain actionable strategies you can apply immediately.